Understanding Website Behaviour Through a Transition Matrix

Author: T.G. Barker | How Google Evaluates Websites | Last reviewed: 14/05/2026.

How Internal Models Shape Search Interpretation

Most website owners think in terms of pages, rankings, keywords, and backlinks. Search systems do not. Modern search systems increasingly evaluate websites as behavioural structures made up of repeated transitions, pathways, and reinforcement patterns. Over time, these repeated pathways form a stable internal model of the website. This model influences how search engines interpret authority, importance, relevance, and user satisfaction.

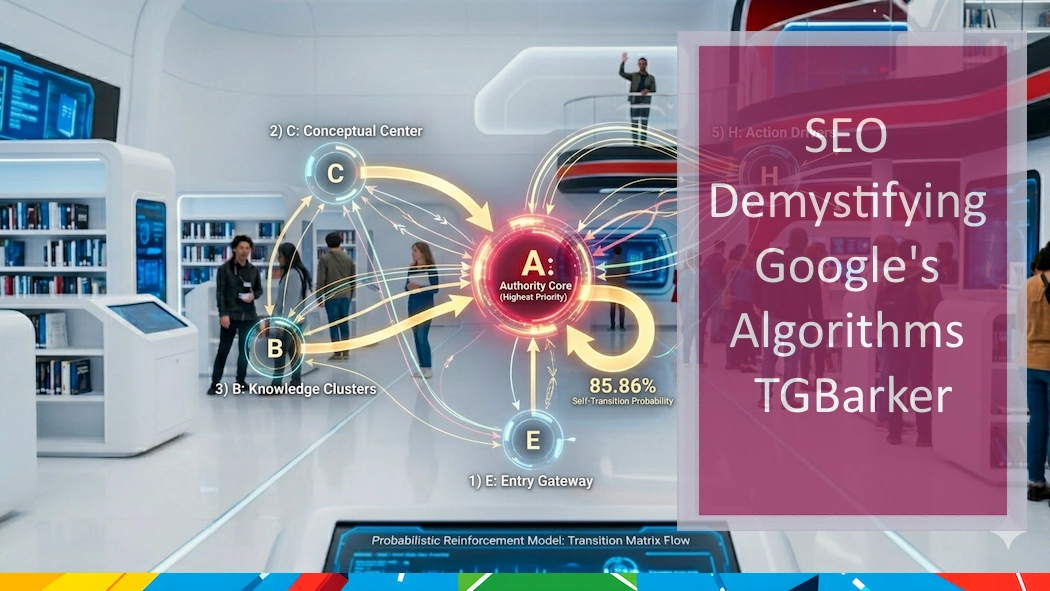

How Transition Matrices Reveal Structural Website Behaviour

One way to observe this behaviour is through a transition matrix. A transition matrix does not simply record traffic. Instead, it measures the probability of movement between different structural states of a website. In practical terms, it answers a fundamental question: if a visitor or crawler is currently in one area of the website, where are they most likely to go next?
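As a minimal sketch of how such a matrix could be estimated, the example below counts consecutive state pairs in a handful of session paths and normalises each row into probabilities. The paths and the function name `estimate_transition_matrix` are hypothetical illustrations, not the data or method behind the matrix in this article.

```python
from collections import Counter, defaultdict


def estimate_transition_matrix(paths):
    """Estimate P(next state | current state) from observed state sequences."""
    counts = defaultdict(Counter)
    for path in paths:
        # Count every consecutive (current, next) pair in the path.
        for current, nxt in zip(path, path[1:]):
            counts[current][nxt] += 1

    matrix = {}
    for current, next_counts in counts.items():
        total = sum(next_counts.values())
        # Row-normalise so the probabilities out of each state sum to 1.
        matrix[current] = {nxt: n / total for nxt, n in next_counts.items()}
    return matrix


# Hypothetical session paths, using the state labels from this article.
paths = [
    ["E", "A", "A", "X"],
    ["E", "E", "A", "X"],
    ["C", "A", "A", "A"],
]

matrix = estimate_transition_matrix(paths)
for state, row in matrix.items():
    print(state, row)
```

Each row answers the question posed above: given the current state, how likely is each possible next state?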

This is closely related to probabilistic modelling and Markov chain analysis, where the next movement depends only on the current state rather than the full historical path. Google's PageRank algorithm is built on similar concepts: it models a surfer moving between pages at random and scores pages by how often that surfer arrives at them. The PageRank model remains an authoritative reference for this type of probabilistic web analysis.

The Transition Matrix

| From / To | E Entry Pages | C Commercial Pages | B Supporting Content | A Authority Core | H High-Value Pages | S Structural Pages | F | X External / Exit |
|---|---|---|---|---|---|---|---|---|
| E | 0.7518 | 0.0061 | 0.0355 | 0.1772 | 0.0125 | 0.0057 | 0.0010 | 0.0102 |
| C | 0 | 0.4453 | 0.0040 | 0.5101 | 0 | 0 | 0.0263 | 0.0142 |
| B | 0 | 0 | 0.5618 | 0.4103 | 0.0093 | 0.0163 | 0 | 0.0023 |
| A | 0 | 0.0071 | 0.0603 | 0.8586 | 0.0052 | 0.0121 | 0.0009 | 0.0558 |
| H | 0.0017 | 0 | 0.0017 | 0.1269 | 0.2098 | 0.0068 | 0.0017 | 0.6514 |
| S | 0 | 0 | 0.0128 | 0.3910 | 0.0064 | 0.4231 | 0 | 0.1667 |
| F | 0 | 0 | 0 | 0.2400 | 0.0400 | 0 | 0.6800 | 0.0400 |
| X | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 1.0000 |
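The matrix can be read as a row-stochastic matrix: each row is a probability distribution over the next state, so it should sum to 1 up to rounding. The sketch below encodes the values from the table and checks that property. The names `STATES`, `P`, and `check_rows`, and the tolerance of 0.001, are assumptions made for illustration.

```python
STATES = ["E", "C", "B", "A", "H", "S", "F", "X"]

# Rows are "from" states; columns follow the order in STATES.
P = {
    "E": [0.7518, 0.0061, 0.0355, 0.1772, 0.0125, 0.0057, 0.0010, 0.0102],
    "C": [0.0, 0.4453, 0.0040, 0.5101, 0.0, 0.0, 0.0263, 0.0142],
    "B": [0.0, 0.0, 0.5618, 0.4103, 0.0093, 0.0163, 0.0, 0.0023],
    "A": [0.0, 0.0071, 0.0603, 0.8586, 0.0052, 0.0121, 0.0009, 0.0558],
    "H": [0.0017, 0.0, 0.0017, 0.1269, 0.2098, 0.0068, 0.0017, 0.6514],
    "S": [0.0, 0.0, 0.0128, 0.3910, 0.0064, 0.4231, 0.0, 0.1667],
    "F": [0.0, 0.0, 0.0, 0.2400, 0.0400, 0.0, 0.6800, 0.0400],
    "X": [0.0, 0.0, 0.0, 0.0, 0.0, 0.0, 0.0, 1.0],
}


def check_rows(matrix, tolerance=1e-3):
    """Return the rows that are not valid probability distributions."""
    problems = {}
    for state, row in matrix.items():
        total = sum(row)
        wrong_length = len(row) != len(STATES)
        has_negative = min(row) < 0
        # The published values are rounded to four decimals, so allow a small gap.
        if wrong_length or has_negative or abs(total - 1.0) > tolerance:
            problems[state] = total
    return problems


print(check_rows(P))  # an empty dict means every row passed the check
```

The final row also shows that X is an absorbing state: once a journey reaches External / Exit, it stays there with probability 1.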

The Authority Core Has Become Structurally Dominant

The most striking feature of this matrix is the dominance of the Authority Core state (A). Pathways from across the site converge on this state: Commercial Pages (C to A, 0.5101), Supporting Content (B to A, 0.4103), Structural Pages (S to A, 0.3910), and framework content (F to A, 0.2400) all route a large share of their transitions back toward the same authority cluster.

The most important figure is the self-transition probability:

$$P(X_{n+1} = A \mid X_n = A) = 0.8586$$

This value is extremely high. Once users or crawlers enter the Authority Core, roughly 86% of their next steps stay within that cluster of pages. In practical terms, the system repeatedly reinforces the same conceptual pathways: certain pages become recognised as structurally central and continue attracting repeated transitions.
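To put the self-transition figure in concrete terms, the sketch below assumes the memoryless (Markov) behaviour described earlier. Under that assumption the number of consecutive steps spent in a state follows a geometric distribution, so a stay probability of 0.8586 implies about seven consecutive steps inside the Authority Core per visit. The function names are illustrative.

```python
def expected_run_length(p_stay):
    """Expected consecutive steps spent in a state, counting the first step."""
    if not 0.0 <= p_stay < 1.0:
        raise ValueError("p_stay must be in [0, 1)")
    # Mean of a geometric distribution with leave probability (1 - p_stay).
    return 1.0 / (1.0 - p_stay)


def probability_still_inside(p_stay, steps):
    """Probability of remaining in the state for `steps` further transitions."""
    return p_stay ** steps


P_STAY_AUTHORITY = 0.8586  # A to A, taken from the transition matrix

run_length = expected_run_length(P_STAY_AUTHORITY)
print(f"Expected steps per visit to A: {run_length:.2f}")
print(f"Still in A after 5 transitions: {probability_still_inside(P_STAY_AUTHORITY, 5):.3f}")
```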

This reflects one of the most important realities in modern search behaviour: rankings are not fixed positions. They emerge from stable reinforcement patterns inside a probabilistic system. Over time, search systems stop evaluating pages individually and instead begin evaluating the structural relationships between them.

Commercial Pages Depend on Conceptual Authority

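The Commercial Pages state reveals another important insight. Commercial content is not operating independently. A visit to a Commercial Page is more likely to move on to the Authority Core (0.5101) than to stay within Commercial Pages (0.4453), so commercial content depends heavily on the Authority Core for reinforcement.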
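One way to quantify this dependence is to ask where movement goes once it leaves the Commercial Pages state. The sketch below takes row C of the matrix, drops the self-transition, and renormalises the rest. On these figures, roughly 92% of onward movement from Commercial Pages lands in the Authority Core. The function name `onward_distribution` is an assumption made for illustration.

```python
# Row C of the transition matrix: probabilities of moving from Commercial Pages.
C_ROW = {
    "E": 0.0, "C": 0.4453, "B": 0.0040, "A": 0.5101,
    "H": 0.0, "S": 0.0, "F": 0.0263, "X": 0.0142,
}


def onward_distribution(row, state):
    """Distribution over next states, given that the journey leaves `state`."""
    others = {target: p for target, p in row.items() if target != state}
    total = sum(others.values())
    if total == 0:
        raise ValueError("state never transitions anywhere else")
    # Renormalise by the observed onward mass so the result sums to 1.
    return {target: p / total for target, p in others.items()}


leaving = onward_distribution(C_ROW, "C")
for target, p in sorted(leaving.items(), key=lambda item: item[1], reverse=True):
    print(f"C -> {target}: {p:.4f}")
```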

Structurally, the website is signalling that trust and visibility originate from conceptual authority rather than direct sales content. This is increasingly important in AI-driven search systems where authority is established through topic ownership, structural clarity, and repeated semantic reinforcement rather than isolated commercial optimisation.

Understanding these relationships is central to how authority flows through a website structure and how search systems learn which areas deserve reinforcement.

Supporting Content Has Become Self-Reinforcing

The Supporting Content state also displays strong internal reinforcement. Supporting pages repeatedly connect to one another (B to B, 0.5618) while simultaneously feeding the Authority Core (B to A, 0.4103). Over time, this forms what can be described as a semantic reinforcement network or knowledge cluster.

Search systems favour this type of behaviour because it creates predictable topical pathways. It signals that the website consistently reinforces the same concepts, relationships, and subject areas across multiple pages.

However, there is also a hidden risk. Once reinforcement becomes too stable, the search system may conclude that it already fully understands the website. At that point, new interpretations become more difficult to establish.

High-Value Pages Behave Like Endpoints

The High-Value Pages state contains one of the most revealing behaviours in the matrix. Once visitors reach these pages, the journey frequently terminates: the transition from H to External / Exit (X) has a probability of 0.6514.

In other words, users leave the site or the crawl process ends. This is not automatically negative. In many cases, it indicates successful intent satisfaction: the page acted as a final destination or resolution point. Structurally, however, it also means these pages are not redistributing authority effectively back into the wider site.

They behave more like destination nodes than routing nodes.
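The distinction between destination and routing nodes can be made explicit by comparing the exit column of the matrix across states. The sketch below does this with a cut-off of 0.5, which is a hypothetical threshold chosen only for illustration and is not part of the original analysis.

```python
# Probability of moving to X (External / Exit) from each state, from the matrix.
EXIT_PROBABILITY = {
    "E": 0.0102, "C": 0.0142, "B": 0.0023, "A": 0.0558,
    "H": 0.6514, "S": 0.1667, "F": 0.0400,
}

DESTINATION_THRESHOLD = 0.5  # hypothetical cut-off, not from the article


def classify(exit_probability, threshold=DESTINATION_THRESHOLD):
    """Label each state by whether most of its transitions leave the site."""
    return {
        state: "destination" if p >= threshold else "routing"
        for state, p in exit_probability.items()
    }


roles = classify(EXIT_PROBABILITY)
ranked = sorted(EXIT_PROBABILITY.items(), key=lambda item: item[1], reverse=True)
for state, p in ranked:
    print(f"{state}: exit {p:.4f} ({roles[state]})")
```

On these figures only H crosses the threshold. Every other state sends most of its movement onward within the site.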

The Homepage Remains a Major Entry Stabiliser

The Entry Pages state demonstrates unusually high self-reinforcement (E to E, 0.7518). This suggests repeated homepage visits, repeated crawler refreshes, or consistent re-entry into the same gateway pages.

However, there is also a significant transition into the Authority Core (E to A, 0.1772). This means entry-level traffic is already being channelled toward conceptual authority pages. The homepage is functioning as a structural distributor rather than existing in isolation.
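The channelling effect becomes clearer over more than one step. As a sketch, the example below computes the probability of being in the Authority Core two transitions after starting on an Entry Page, by summing over every possible intermediate state. On the published figures this rises from 0.1772 after one step to roughly 0.31 after two. The helper name `two_step` is illustrative.

```python
STATES = ["E", "C", "B", "A", "H", "S", "F", "X"]

# Transition matrix from the article; rows and columns follow STATES.
P = [
    [0.7518, 0.0061, 0.0355, 0.1772, 0.0125, 0.0057, 0.0010, 0.0102],
    [0.0, 0.4453, 0.0040, 0.5101, 0.0, 0.0, 0.0263, 0.0142],
    [0.0, 0.0, 0.5618, 0.4103, 0.0093, 0.0163, 0.0, 0.0023],
    [0.0, 0.0071, 0.0603, 0.8586, 0.0052, 0.0121, 0.0009, 0.0558],
    [0.0017, 0.0, 0.0017, 0.1269, 0.2098, 0.0068, 0.0017, 0.6514],
    [0.0, 0.0, 0.0128, 0.3910, 0.0064, 0.4231, 0.0, 0.1667],
    [0.0, 0.0, 0.0, 0.2400, 0.0400, 0.0, 0.6800, 0.0400],
    [0.0, 0.0, 0.0, 0.0, 0.0, 0.0, 0.0, 1.0],
]


def two_step(matrix, states, start, end):
    """Probability of being in `end` exactly two transitions after `start`."""
    i, j = states.index(start), states.index(end)
    # Sum over every intermediate state k: start -> k -> end.
    return sum(matrix[i][k] * matrix[k][j] for k in range(len(states)))


one_step = P[STATES.index("E")][STATES.index("A")]
after_two = two_step(P, STATES, "E", "A")
print(f"E -> A in one step:  {one_step:.4f}")
print(f"E -> A in two steps: {after_two:.4f}")
```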

This aligns closely with the idea that Google evaluates websites as connected systems rather than collections of independent pages.

Structural Stability and Plateau Formation

Perhaps the most important insight from the entire matrix is the degree of structural stability that has formed. The website is no longer behaving like a traditional collection of SEO pages. Instead, it behaves more like a conceptual graph network where repeated transitions continually reinforce the same structural interpretation.

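In a Markov chain, a state with a high self-transition probability behaves almost like an absorbing state: movement that enters it tends to stay. In this matrix the only truly absorbing state is X (External / Exit), but the Authority Core (0.8586) and Entry Pages (0.7518) act as semi-absorbing states that repeatedly attract and retain movement.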
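One way to measure how strongly these states retain movement is the expected number of transitions before a journey reaches the absorbing exit state X. The sketch below estimates that quantity by repeatedly applying the relation t(s) = 1 + sum over non-exit states j of P(s, j) * t(j) until the values stop changing. It assumes the Markov model holds, and the function name, tolerance, and iteration cap are illustrative choices.

```python
STATES = ["E", "C", "B", "A", "H", "S", "F", "X"]

# Transition matrix from the article; rows and columns follow STATES.
P = [
    [0.7518, 0.0061, 0.0355, 0.1772, 0.0125, 0.0057, 0.0010, 0.0102],
    [0.0, 0.4453, 0.0040, 0.5101, 0.0, 0.0, 0.0263, 0.0142],
    [0.0, 0.0, 0.5618, 0.4103, 0.0093, 0.0163, 0.0, 0.0023],
    [0.0, 0.0071, 0.0603, 0.8586, 0.0052, 0.0121, 0.0009, 0.0558],
    [0.0017, 0.0, 0.0017, 0.1269, 0.2098, 0.0068, 0.0017, 0.6514],
    [0.0, 0.0, 0.0128, 0.3910, 0.0064, 0.4231, 0.0, 0.1667],
    [0.0, 0.0, 0.0, 0.2400, 0.0400, 0.0, 0.6800, 0.0400],
    [0.0, 0.0, 0.0, 0.0, 0.0, 0.0, 0.0, 1.0],
]


def expected_steps_to_exit(matrix, states, exit_state="X", tol=1e-10, max_iter=100_000):
    """Expected transitions before reaching `exit_state`, per starting state."""
    index = {state: position for position, state in enumerate(states)}
    transient = [state for state in states if state != exit_state]
    steps = {state: 0.0 for state in transient}
    for _ in range(max_iter):
        # One transition is always taken, plus the expected remainder
        # from wherever that transition lands (zero if it lands on exit).
        updated = {
            s: 1.0 + sum(matrix[index[s]][index[t]] * steps[t] for t in transient)
            for s in transient
        }
        change = max(abs(updated[s] - steps[s]) for s in transient)
        steps = updated
        if change < tol:
            break
    return steps


steps = expected_steps_to_exit(P, STATES)
for state, value in sorted(steps.items(), key=lambda item: item[1], reverse=True):
    print(f"{state}: {value:.1f} expected transitions before exit")
```

States with larger values retain movement for longer. Journeys that start on High-Value Pages reach the exit much sooner than journeys that start in the Authority Core.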

That stability can be extremely valuable because it strengthens authority, reinforces topical ownership, and improves interpretative consistency. However, it also introduces the possibility of structural lock-in.

Once a search system settles on a stable interpretation, additional optimisation activity may simply reinforce the existing model rather than change it. This is one of the key reasons many websites experience ranking plateaus despite ongoing SEO activity.

Understanding this behaviour is central to why SEO progress often plateaus and why changing rankings often requires structural change rather than additional optimisation.

Search Systems Learn Pathways, Not Just Pages

The most important lesson from this transition matrix is that search systems increasingly learn pathways rather than isolated content. They observe repeated transitions, reinforced entry points, authority concentrations, behavioural loops, and structural endpoints.

Over time, these pathways form a stable probabilistic interpretation of the website.

This means that improving rankings is often less about isolated optimisation tasks and more about changing the pathways the system repeatedly observes. Structure, authority flow, content relationships, behavioural reinforcement, and repeated transitions all contribute to how the model eventually stabilises.

In many cases, the challenge is not visibility itself. The challenge is persuading the system to form a different interpretation.