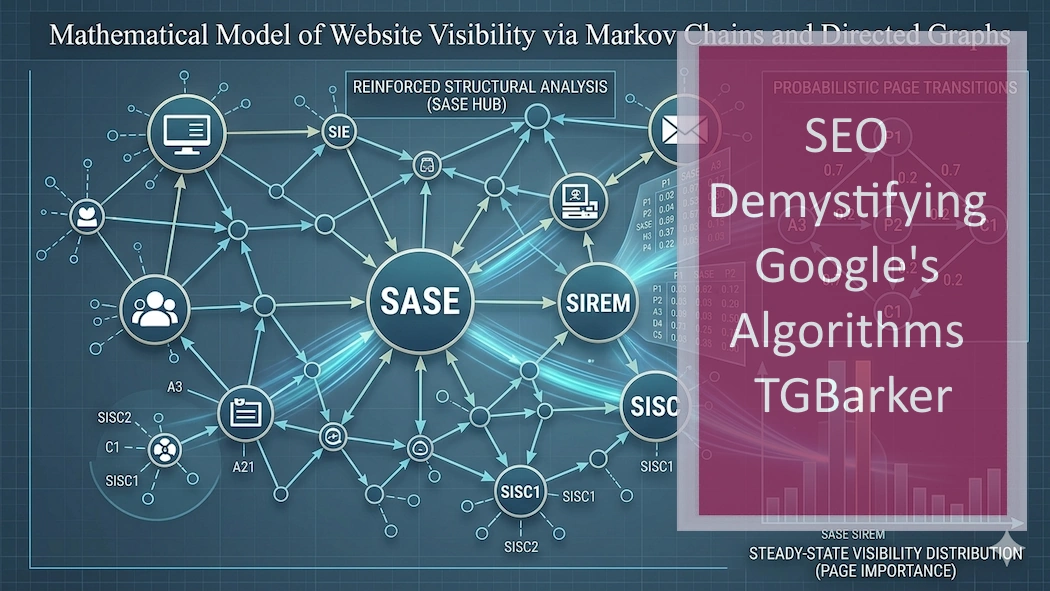

The Markov Model of Website Structure and Search Visibility

Ranking as a mathematical outcome of your website's structure. The mathematical foundation behind Google’s original PageRank system is known as the Random Surfer model.


Author: T.G. Barker | How Google Evaluates Websites | Last reviewed: 06/06/2026.

Search systems do not evaluate websites in the same way a human reader does. Instead of reading a page and forming an opinion about it, search engines analyse websites as structured networks of pages and links. The behaviour of that network can be described mathematically.

Understanding Your Website as a System

This review is grounded in a structured understanding of how search systems interpret websites: how authority flows, how structure is evaluated, and how stable visibility is formed over time.

One of the most useful ways to understand this process is through the concept of a Markov Chain. In this model, every page on a website represents a state, and every internal link represents a transition between states. Over time, the structure of these transitions determines where attention within the system naturally accumulates.
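As a rough illustration of pages as states and links as transitions, the sketch below turns a small link map into a transition matrix. The four-page site and the `transition_matrix` helper are hypothetical, and it assumes every link on a page is equally likely to be followed, which is a simplification.

```python
# Hypothetical four-page site: each key is a page, each value lists the pages it links to.
links = {
    "home": ["services", "blog"],
    "services": ["home"],
    "blog": ["home", "guide"],
    "guide": ["blog"],
}


def transition_matrix(links):
    """Return (pages, P), where P[i][j] is the chance of moving from page i to page j."""
    pages = sorted(links)
    index = {page: i for i, page in enumerate(pages)}
    n = len(pages)
    P = [[0.0] * n for _ in range(n)]
    for page, targets in links.items():
        if not targets:
            # Assumption: a page with no outgoing links jumps to any page with equal chance.
            P[index[page]] = [1.0 / n] * n
            continue
        for target in targets:
            # Assumption: every link on a page is equally likely to be followed.
            P[index[page]][index[target]] += 1.0 / len(targets)
    return pages, P


pages, P = transition_matrix(links)
for page, row in zip(pages, P):
    print(page, [round(p, 2) for p in row])
```

Each row sums to 1, because a visitor on any page has to go somewhere on the next step.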

This perspective explains why some websites continue to grow in visibility while others plateau despite ongoing SEO activity. The issue is often not content quality or keyword targeting, but the way authority flows through the site itself.

For a broader explanation of how search systems interpret websites as structured entities, see How Google Evaluates Websites.

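The mathematical foundation behind Google’s original PageRank system is known as the Random Surfer model. Imagine a visitor arriving on a website and following links at random from one page to another, occasionally jumping to a random page instead of following a link. Over time, the share of visits each page receives settles into what mathematicians call a stationary distribution. The occasional random jump is what guarantees that this distribution exists and is unique, even when parts of the site are poorly connected.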

In practical terms, this means that some pages naturally become more likely destinations within the network. These pages accumulate probability because of their position in the site’s link structure.
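One way to see this accumulation is to simulate the random surfer directly. The sketch below is a minimal power iteration, not a description of how any search engine is implemented. The site map and the function name `stationary_distribution` are hypothetical, and the damping value of 0.85 is the figure usually quoted for the original PageRank formulation.

```python
# Hypothetical site map: page -> pages it links to.
links = {
    "home": ["services", "blog"],
    "services": ["home"],
    "blog": ["home", "services", "guide"],
    "guide": ["home", "blog"],
}


def stationary_distribution(links, damping=0.85, iterations=100):
    """Approximate the long-run visit probability of each page by power iteration."""
    pages = sorted(links)
    n = len(pages)
    rank = {page: 1.0 / n for page in pages}  # start with every page equally likely
    for _ in range(iterations):
        # With probability (1 - damping) the surfer jumps to a random page.
        new_rank = {page: (1.0 - damping) / n for page in pages}
        for page, targets in links.items():
            if targets:
                # Otherwise the surfer follows one of the page's links at random.
                share = damping * rank[page] / len(targets)
                for target in targets:
                    new_rank[target] += share
            else:
                # Assumption: a page without links hands its probability to all pages evenly.
                share = damping * rank[page] / n
                for other in pages:
                    new_rank[other] += share
        rank = new_rank
    return rank


rank = stationary_distribution(links)
for page, value in sorted(rank.items(), key=lambda item: -item[1]):
    print(f"{page}: {value:.3f}")
```

In this toy site the home page ends up with the largest share because every other page links to it, while the guide, which is linked from only one page, ends up with the smallest.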

Search systems use similar probability-based models to interpret which pages within a website appear structurally central. A page does not become influential simply because it exists. Its position within the network determines how often a theoretical user would encounter it.

From a mathematical perspective, a website can be represented as a directed graph. Each page acts as a node, while each internal link forms an edge connecting one node to another.

Graph theory provides tools for analysing how influence spreads through these networks. Concepts such as centrality, connectivity, and probability distribution help explain why certain pages become dominant while others remain peripheral.
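Two of the simplest graph measures can be sketched in a few lines: how many internal links point at each page, and how many clicks each page sits from the home page. The site map and the helper names `inbound_counts` and `click_depth` are hypothetical, and real audits would typically use richer centrality measures than these.

```python
from collections import deque

# Hypothetical site map: page -> pages it links to.
links = {
    "home": ["services", "blog"],
    "services": ["home"],
    "blog": ["home", "guide"],
    "guide": ["blog"],
    "old-landing": ["home"],  # no page links to this one
}


def inbound_counts(links):
    """Count how many internal links point at each page (a simple centrality measure)."""
    counts = {page: 0 for page in links}
    for targets in links.values():
        for target in targets:
            counts[target] = counts.get(target, 0) + 1
    return counts


def click_depth(links, start):
    """Breadth-first search: the fewest clicks needed to reach each page from start."""
    depth = {start: 0}
    queue = deque([start])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depth:
                depth[target] = depth[page] + 1
                queue.append(target)
    return depth


inbound = inbound_counts(links)
depth = click_depth(links, "home")
unreachable = [page for page in links if page not in depth]
print(inbound)
print(depth)
print(unreachable)
```

Pages that never appear in the depth map cannot be reached by following links from the home page, which is one concrete meaning of a page being peripheral.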

Many websites unintentionally create structures where large portions of their content primarily link to each other without directing attention toward the pages that represent the site’s main purpose. When this occurs, search systems may interpret the site as informational rather than authoritative on a specific topic.

This structural perspective is explored further in Structural Authority Flow in Search Systems.

A common situation occurs when a website accumulates a large amount of supporting or informational content. These pages link extensively to each other, forming dense clusters within the site’s internal link graph.

Within a Markov model, if the whole cluster is treated as a single aggregated state, that state has a high self-transition probability. Once a crawler or theoretical user enters the cluster, the probability of remaining within it at each step is very high.

In practical terms, this means search systems repeatedly encounter the same informational pages while rarely transitioning toward the pages that define the site’s core focus.

The result is a structural imbalance. The site may appear highly informative, yet the system has limited signals indicating which pages represent the central authority of the website.
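The imbalance described above can be estimated with a simple per-page measure: the share of a page's links that stay inside the cluster. The sketch below is illustrative only. The site map, the cluster membership, and the `cluster_retention` helper are hypothetical, and it assumes all links on a page are followed with equal probability.

```python
# Hypothetical site map with a dense cluster of blog posts.
links = {
    "home": ["services", "post-a"],
    "services": ["home"],
    "post-a": ["post-b", "post-c"],
    "post-b": ["post-a", "post-c"],
    "post-c": ["post-a", "post-b", "home", "services"],
}


def cluster_retention(links, cluster):
    """For each page in the cluster, the chance that the next click stays in the cluster."""
    cluster = set(cluster)
    stay = {}
    for page in sorted(cluster):
        targets = links.get(page, [])
        if targets:
            inside = sum(1 for target in targets if target in cluster)
            stay[page] = inside / len(targets)
    return stay


stay = cluster_retention(links, ["post-a", "post-b", "post-c"])
average_stay = sum(stay.values()) / len(stay)
for page, value in stay.items():
    print(f"{page}: {value:.2f}")
print(f"average: {average_stay:.2f}")
```

Here two of the three posts never link out of the cluster, so a visitor who lands on them can only leave through the third post. Adding links from those posts to the core pages is what lowers the retention figure.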

Because search systems interpret websites as interconnected graphs, the distribution of links plays a critical role in shaping how a site is understood. Pages that receive links from multiple important nodes gradually accumulate structural influence within the network.

This does not happen through manipulation or isolated optimisation efforts. Instead, it emerges from the overall architecture of the site and the relationships between its pages.

Understanding these relationships is often the first step toward explaining why two websites with similar content can perform very differently in search visibility.

The diagnostic process used to examine these structural patterns is described in more detail on the How the Strategic Search Authority Review Works page.

Markov models allow analysts to calculate how probability flows through a website’s internal link structure. By analysing transition matrices and stationary distributions, it becomes possible to identify which pages naturally accumulate influence within the network.

This type of analysis does not attempt to manipulate search systems. Instead, it provides insight into how those systems already interpret the structure of a website.

When organisations reach a point where further SEO activity produces little movement, structural analysis can often reveal why. The issue is rarely the absence of content. More often, it is a question of how the site’s architecture communicates importance.

Details of how this diagnostic work is delivered can be found on the Strategic Search Authority Review pricing page.

Modern search systems incorporate far more signals than the early PageRank model alone. Machine learning systems now analyse language, context, entities, and user behaviour.

However, the structural foundations of the web have not changed. Websites remain networks of connected documents, and probability-based models remain one of the clearest ways to understand how influence spreads through those networks.

For organisations trying to understand why search visibility grows, stalls, or shifts unexpectedly, examining the underlying structure of a website often provides clearer answers than focusing solely on individual pages.

As search systems evolve toward entity understanding and AI-assisted interpretation, the importance of clear structural signals is likely to increase rather than diminish.

Websites that communicate authority through coherent internal structures are easier for both crawlers and AI systems to interpret. In contrast, fragmented structures often leave search systems uncertain about which pages represent the core focus of the site.

Understanding these dynamics helps explain why search visibility is rarely determined by isolated optimisation techniques. It is shaped by how the entire website functions as a connected system.