How Google Ranks Search Results

The hidden model behind your website’s rankings. Rankings are probabilistic.

Author: T.G. Barker | How Google Evaluates Websites | Last reviewed: 20/05/2026.

Search rankings are not assigned in the way most people assume, nor are they calculated once and fixed in place. Instead, Google continuously refines an internal model of the web, reassessing how pages relate to one another as new content emerges, links shift, and user behaviour provides additional signals. This ongoing reassessment is driven by how authority moves through the web’s structure, a process explained in structural authority flow in search systems.

A structural explanation of how Google ranks search results, and why most SEO fails to influence those rankings

Rankings are continuously re-evaluated as Google updates its interpretation of your website. They are not fixed. And they are not determined by a checklist of individual factors. Google ranks search results by building and refining an internal model of the web — one that interprets websites as structured entities rather than isolated pages.

If you misunderstand that process, everything that follows becomes inefficient.

Google does not rank pages in isolation. It evaluates websites as systems.

This evaluation is based on how content, authority, and intent are organised across a domain — not simply on what exists on a single page.

Over time, Google refines its understanding through crawling, linking patterns, and user interaction signals. Once that understanding stabilises, rankings stabilise with it.

This is why many websites plateau. Not because effort has stopped, but because evaluation has reached a consistent conclusion.

If you stop reading here, understand this:

Search rankings change when interpretation changes — not when activity increases.

Google does not “read” websites in the way humans do.

It analyses structure.

It maps relationships.

It measures how authority flows between pages.

At a foundational level, the web can be understood as a network. Each page is a node. Each link is a connection. The behaviour of that network determines how visibility is assigned.

This is why understanding how Google evaluates websites is critical before attempting to influence rankings.

One of the core mechanisms behind Google’s ranking system is PageRank.

PageRank is not just a score. It is a model of how authority flows through a network. Pages do not “earn” rankings directly. They receive visibility based on how authority reaches them through links — both internal and external.

If authority is concentrated in the wrong areas of a website, high-value pages will struggle to rank regardless of how well they are optimised. This is explored further in structural authority flow in search systems.
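The idea that authority reaches a page through links can be sketched with the classic PageRank power iteration. This is a minimal illustration of the originally published algorithm, not a description of Google's current production systems. The six-page site, its page names, and the `pagerank` helper are hypothetical and exist only for this example.

```python
def pagerank(links, damping=0.85, tol=1e-10, max_iter=1000):
    """Classic PageRank by power iteration on a dict {page: [linked pages]}."""
    pages = sorted(set(links) | {t for targets in links.values() for t in targets})
    n = len(pages)
    rank = {p: 1.0 / n for p in pages}
    for _ in range(max_iter):
        # Pages with no outgoing links spread their rank evenly over all pages.
        dangling = sum(rank[p] for p in pages if not links.get(p))
        new_rank = {p: (1.0 - damping) / n + damping * dangling / n for p in pages}
        # Each page splits its damped rank evenly across its outgoing links.
        for page, targets in links.items():
            for target in targets:
                new_rank[target] += damping * rank[page] / len(targets)
        change = sum(abs(new_rank[p] - rank[p]) for p in pages)
        rank = new_rank
        if change < tol:
            break
    return rank


# Hypothetical site: the blog pages link to each other, but nothing links
# to the "service" page, so no authority can reach it through links.
site = {
    "home": ["blog", "about"],
    "blog": ["post-a", "post-b", "home"],
    "post-a": ["post-b", "blog"],
    "post-b": ["post-a", "blog"],
    "about": ["home"],
    "service": ["home"],
}

ranks = pagerank(site)
for page, score in sorted(ranks.items(), key=lambda item: -item[1]):
    print(f"{page:8s} {score:.4f}")
```

In this toy graph the service page ends up with the lowest score, because the only rank it receives is the small baseline every page gets from the random jump. Its on-page content plays no part in the calculation.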

Google continuously crawls and reassesses websites. Each crawl reinforces or adjusts its internal model. Over time, patterns emerge:

Once these patterns become consistent, rankings stabilise. This is not stagnation. It is convergence. At this point, additional content, links, or optimisation often reinforce the existing position rather than change it. This is why many organisations continue investing in SEO without seeing meaningful improvement.

Rather than thinking in terms of isolated ranking factors, it is more useful to think in terms of systems. Google’s evaluation is shaped by the interaction of multiple layers:

These elements do not operate independently. They reinforce each other. When they align, visibility increases. When they conflict, rankings become unstable or plateau.

Most SEO strategies are built on a flawed “Accumulation Model.” They assume that if you keep adding more—more content, more keywords, more backlinks—the total value of the site must eventually rise. In a probabilistic system like Google, the opposite is often true due to three specific mathematical hurdles:

In information theory, Entropy is a measure of disorder or uncertainty. When you add 50 new blog posts that are only loosely related to your core service, you aren’t “growing” your site; you are increasing its entropy.

Google seeks Confidence. In this model, that confidence can be thought of as a “Confidence Score” based on how consistently your internal edges (links) point toward a singular topical conclusion. If your graph is a tangled web of unrelated nodes, the “Signal” of your authority is drowned out by the “Noise” of your sprawl. Most SEO fails because it increases noise while expecting a stronger signal.
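The entropy idea can be made concrete with Shannon's formula applied to the mix of topics on a site. This is only an illustrative sketch: the topic labels, the two example sites, and the `topic_entropy` helper are assumptions made for this example, and nothing here is a documented Google metric.

```python
import math
from collections import Counter


def topic_entropy(page_topics):
    """Shannon entropy (in bits) of the distribution of topics across pages."""
    counts = Counter(page_topics)
    total = len(page_topics)
    return sum(-(c / total) * math.log2(c / total) for c in counts.values())


# Hypothetical sites, one topic label per page.
focused = ["plumbing"] * 9 + ["heating"]
sprawling = ["plumbing"] * 4 + [
    "heating", "recipes", "travel", "crypto", "fitness", "news",
]

print("focused site entropy:  ", topic_entropy(focused))
print("sprawling site entropy:", topic_entropy(sprawling))
```

Both sites have ten pages, but the sprawling one spreads them over seven topics and scores a much higher entropy. Under the assumption that topical consistency matters, adding loosely related pages raises this number without adding anything to the core topic.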

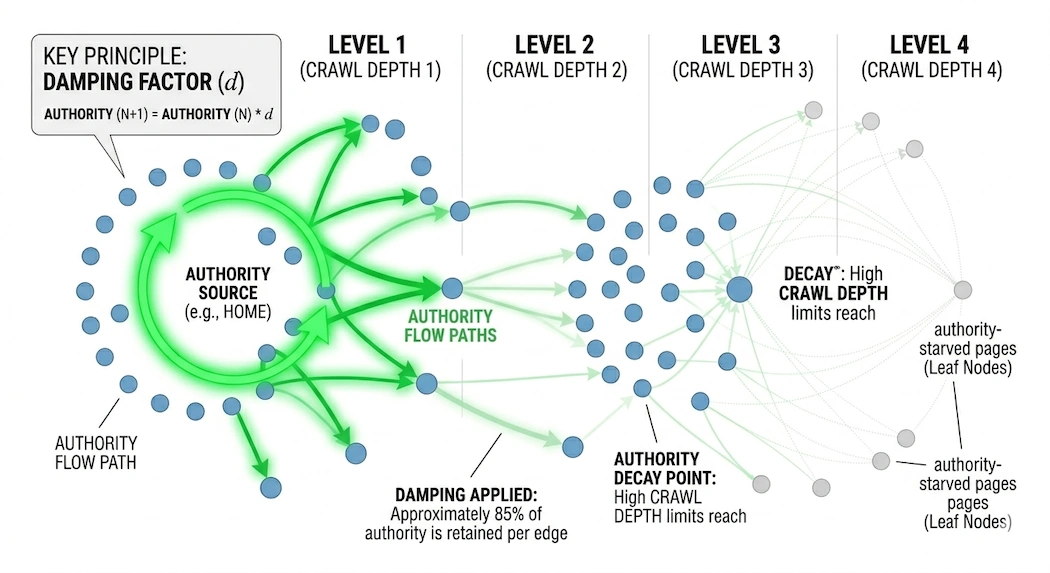

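In the original PageRank model, a “Random Surfer” follows a link from the current page with a fixed probability and otherwise jumps to a random page. That probability is known as the Damping Factor (0.85 in the original PageRank algorithm). As a result, only about 85% of a page’s authority is passed on at each link hop, and that share is divided among the page’s outgoing links.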

Mathematically, authority dissipates as it travels. If your high-value service page is buried four or five clicks away from your homepage, it is receiving a fraction of a fraction of your site’s power. You can optimise the meta tags of that page forever, but if the Damping Factor has already starved the node of authority, it is unlikely to reach the first page.
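A rough way to see the “fraction of a fraction” effect is to follow a single path from the homepage and multiply the loss at each hop. The sketch below is a deliberate simplification: it assumes every page on the path splits its vote evenly across the same number of links, and it ignores authority arriving by other routes, which full PageRank would add up. The `authority_share` helper and the figure of ten links per page are illustrative assumptions.

```python
def authority_share(depth, links_per_page, damping=0.85):
    """Fraction of a page's authority that reaches a page `depth` clicks away
    along one path, if each page on the path has `links_per_page` links."""
    return (damping / links_per_page) ** depth


# Assumed: ten outgoing links on every page along the path.
for depth in range(1, 6):
    share = authority_share(depth, links_per_page=10)
    print(f"{depth} click(s) from the homepage: {share:.8f} of its authority")
```

Each extra click multiplies the share by the same factor, so the decline is geometric rather than linear. Moving a page one click closer to the homepage, or reducing the number of competing links on the path, changes the result far more than any on-page adjustment could.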

As we discussed, Google’s model eventually reaches a Stationary Distribution—a state where it has decided exactly how much “weight” every page on your site deserves based on the current graph topology.

Standard SEO activity, such as tweaking a few headers or meta descriptions, is a minor perturbation that the system easily ignores. To move the needle, you don’t need more activity; you need to re-engineer the graph topology. You must prune low-value edges and create “High-Conductivity” paths to your Power Nodes to force the algorithm to recalculate the entire system.
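The difference between a cosmetic change and a change in graph topology can be sketched by recomputing classic PageRank on two versions of the same site. This is an illustration under stated assumptions rather than a model of Google's live systems: the eight-page site, its page names, and the `pagerank` helper are hypothetical. Note that a header or meta description tweak never appears in the link graph, so in this model it leaves every score exactly as it was.

```python
def pagerank(links, damping=0.85, iterations=200):
    """Classic PageRank by power iteration on a dict {page: [linked pages]}."""
    pages = sorted(set(links) | {t for ts in links.values() for t in ts})
    n = len(pages)
    rank = dict.fromkeys(pages, 1.0 / n)
    for _ in range(iterations):
        # Pages with no outgoing links spread their rank evenly over all pages.
        dangling = sum(rank[p] for p in pages if not links.get(p))
        new_rank = dict.fromkeys(pages, (1.0 - damping) / n + damping * dangling / n)
        for page, targets in links.items():
            for target in targets:
                new_rank[target] += damping * rank[page] / len(targets)
        rank = new_rank
    return rank


# Hypothetical site: the service page sits four clicks below the homepage.
before = {
    "home": ["category", "blog"],
    "category": ["subcategory", "home"],
    "subcategory": ["listing", "home"],
    "listing": ["service", "home"],
    "service": ["home"],
    "blog": ["post-1", "post-2", "home"],
    "post-1": ["blog"],
    "post-2": ["blog"],
}

# Structural change: link to the service page from the homepage and the posts.
after = dict(before)
after["home"] = ["service", "category", "blog"]
after["post-1"] = ["blog", "service"]
after["post-2"] = ["blog", "service"]

rank_before = pagerank(before)
rank_after = pagerank(after)
print("service score before:", rank_before["service"])
print("service score after: ", rank_after["service"])
```

Only the edges changed between the two runs, yet the stationary distribution shifts and the service page receives a larger share. This is the sense in which new paths to a key page force a recalculation that on-page edits cannot.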

For rankings to change, the underlying model must change. This requires shifts in how Google interprets the website:

These are not surface-level adjustments. They are structural changes. And they take time to be recognised.

Understanding how Google ranks search results is one thing. Understanding how your own website is currently being interpreted is another.

The Strategic Search Authority Review examines:

Rankings are not positions. They are outcomes of a probabilistic system. Understanding the hidden model that drives this process means understanding how your website is evaluated as a system and why rankings plateau.