Mathematical Model Behind Website Rankings

Author: T.G. Barker | How Google Evaluates Websites | Last reviewed: 07/05/2026

Most website owners think rankings are determined by isolated actions: adding keywords, publishing content, or building links. But modern search systems do not evaluate websites as disconnected pages. They construct probabilistic models based on repeated patterns observed across the entire structure of a site.

One way to visualise this process is through a simplified mathematical expression inspired by probabilistic systems and Markov-style reinforcement models:

$$
\lim_{n \to \infty} e^{C(x_n)} \cdot e^{A(x_n)} \cdot e^{B(x_n)} = e^{M(x_n)}
$$

In simplified terms:

- $C(x_n)$ represents content patterns,

- $A(x_n)$ represents authority and linking pathways,

- $B(x_n)$ represents behavioural reinforcement,

- $M(x_n)$ represents the learned model the search system forms about the website over time.
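One way to read the expression, offered as a sketch rather than a formal result: a product of exponentials is the exponential of the sum of the exponents, so the three signals combine additively inside a single exponent. Assuming each of the three terms converges as $n$ grows, and treating $M$ as the limiting value (an interpretation, since the expression does not define it formally):

$$
e^{C(x_n)} \cdot e^{A(x_n)} \cdot e^{B(x_n)} = e^{C(x_n) + A(x_n) + B(x_n)}
$$

$$
M = \lim_{n \to \infty} \bigl( C(x_n) + A(x_n) + B(x_n) \bigr)
$$

Under that reading, the learned model is the settled sum of content, authority, and behavioural signals, so a change in any one of them shifts the whole model rather than a single isolated factor.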

The important idea is not the equation itself, but what it represents.

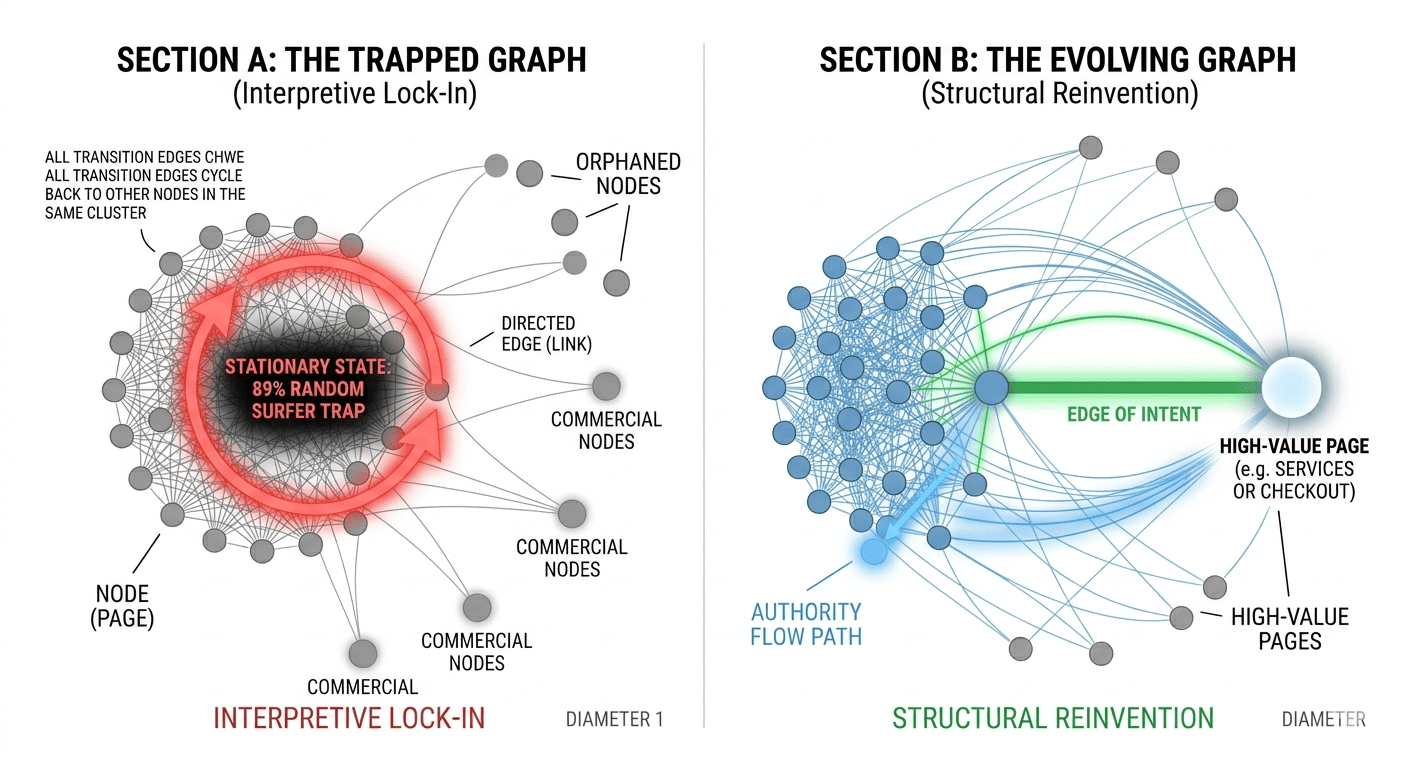

Search systems continuously observe how pages connect together, how authority flows through the structure, how users move between pages, and which areas repeatedly satisfy intent. Over time, these repeated transitions reinforce certain pathways while weakening others. Eventually the system settles into a relatively stable interpretation of the website.
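The settling behaviour described above can be illustrated with a small Markov chain. The sketch below is a toy, not a description of how any search engine works: the three pages, the transition probabilities, and the function names `step` and `settle` are all assumptions made for illustration. It repeats the same transitions from two different starting points and shows that both end up at the same stable distribution.

```python
# Hypothetical three-page site. The numbers are illustrative, not measured data.
# TRANSITIONS[i][j] is the assumed probability of moving from page i to page j.
PAGES = ["home", "services", "blog"]
TRANSITIONS = [
    [0.1, 0.6, 0.3],  # from home
    [0.4, 0.2, 0.4],  # from services
    [0.5, 0.3, 0.2],  # from blog
]


def step(dist, matrix):
    """Apply one round of transitions to a probability distribution over pages."""
    n = len(matrix)
    return [sum(dist[i] * matrix[i][j] for i in range(n)) for j in range(n)]


def settle(start, matrix, tol=1e-12, max_steps=10_000):
    """Repeat transitions until the distribution stops changing (within tol)."""
    dist = list(start)
    for steps in range(1, max_steps + 1):
        nxt = step(dist, matrix)
        if max(abs(a - b) for a, b in zip(dist, nxt)) < tol:
            return nxt, steps
        dist = nxt
    return dist, max_steps


# Start once with all weight on "home" and once with all weight on "blog".
from_home, steps_home = settle([1.0, 0.0, 0.0], TRANSITIONS)
from_blog, steps_blog = settle([0.0, 0.0, 1.0], TRANSITIONS)

for page, a, b in zip(PAGES, from_home, from_blog):
    print(f"{page:9s} from home: {a:.4f}   from blog: {b:.4f}")
```

Because every transition in this toy matrix has a non-zero probability, the starting point stops mattering after enough repetitions. That is the sense in which repeated signals can settle into a stable interpretation.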

Poor website rankings are not always caused by a lack of activity

The issue is often not a lack of activity. It is that new activity reinforces the same structural interpretation the system has already learned. In probabilistic terms, the model stabilises.

Whether a website is brand new or has existed for many years, search systems still face the same underlying challenge: determining how the site should be interpreted within the wider web. For new websites, the system has little historical data, so it relies heavily on emerging structural patterns, linking relationships, content focus, and behavioural signals to begin forming an initial probabilistic model. Older websites, however, often face a different issue. Over time, repeated signals can stabilise into a fixed interpretation, causing rankings and visibility to plateau as the system reinforces the same learned pathways. In both cases, the central issue is not simply optimisation, but how search systems gradually construct, reinforce, and sometimes lock into a model of what the website represents.

This is closely related to the principles explored throughout Structural Authority Flow and the wider concept of websites being interpreted as systems rather than collections of individual pages.

From this perspective, rankings are not fixed positions.

They are outcomes emerging from repeated probabilistic reinforcement across content, authority, structure, and behaviour.

Understanding how that model forms

Understanding this is often more important than simply adding more SEO activity. The concept is closely related to the principles behind PageRank, where the web can be understood as a probabilistic Markov system in which repeated transitions between pages gradually reinforce the relative importance and authority of certain pathways.
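For readers who want to see the PageRank idea in concrete form, here is a minimal sketch of the textbook power-iteration version. It is not Google's production system. The link graph, the page names, and the function name `pagerank` are hypothetical, and the damping factor of 0.85 is the commonly cited default rather than a known live setting. The sketch also assumes every linked page appears as a key in the graph.

```python
# Hypothetical link graph: each page maps to the pages it links to.
LINKS = {
    "home": ["services", "blog"],
    "services": ["home", "contact"],
    "blog": ["home", "services"],
    "contact": ["home"],
}


def pagerank(links, damping=0.85, tol=1e-10, max_iter=1000):
    """Textbook PageRank by power iteration over a dict-based link graph."""
    pages = list(links)
    n = len(pages)
    rank = {p: 1.0 / n for p in pages}  # start with equal importance
    for _ in range(max_iter):
        # Rank held by pages with no outgoing links is spread over all pages.
        dangling = sum(rank[p] for p in pages if not links[p])
        new_rank = {p: (1.0 - damping) / n + damping * dangling / n for p in pages}
        # Each page passes its damped rank equally along its outgoing links.
        for page, targets in links.items():
            for target in targets:
                new_rank[target] += damping * rank[page] / len(targets)
        change = sum(abs(new_rank[p] - rank[p]) for p in pages)
        rank = new_rank
        if change < tol:  # scores have stopped moving
            break
    return rank


ranks = pagerank(LINKS)
for page, score in sorted(ranks.items(), key=lambda item: -item[1]):
    print(f"{page:9s} {score:.4f}")
```

In this toy graph the page that receives links from every other page ends up with the highest score, which mirrors the point above: importance emerges from repeated transitions across the whole structure, not from any single page in isolation.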