Ranking Probability: Why Search Visibility Is a Probabilistic System, Not a Fixed Position

Author: T.G. Barker | How Google Evaluates Websites | Last reviewed: 14/05/2026

Why Search Visibility Is an Outcome, Not a Position

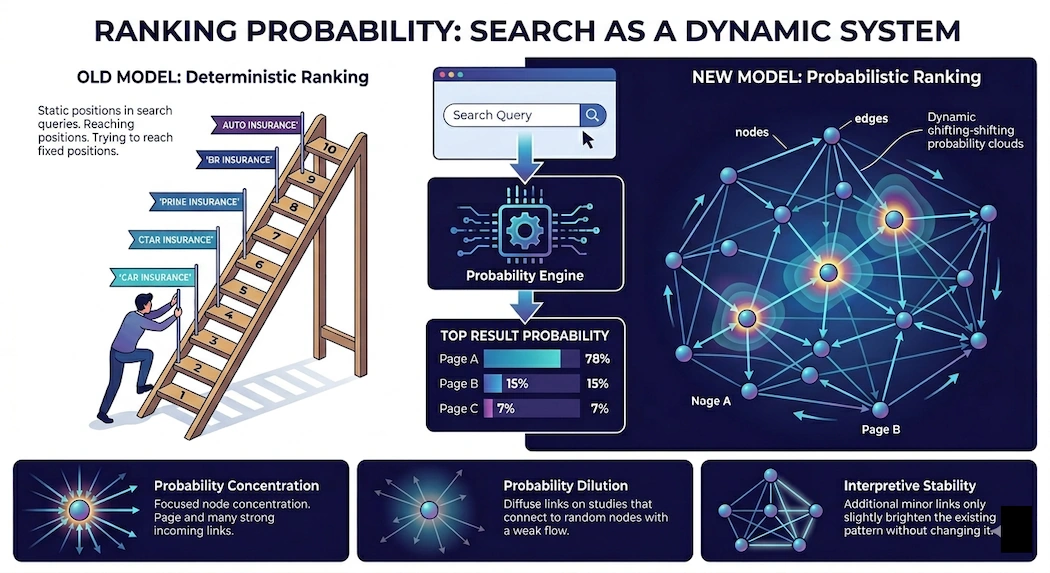

Search rankings are often discussed as though they are fixed positions waiting to be achieved. Businesses are told to improve keywords, publish more content, gain more backlinks, and eventually “reach number one.” This creates the impression that rankings are static targets on a predictable ladder. Modern search systems operate very differently. A ranking is not simply a position; it is the visible outcome of a probabilistic evaluation system that continuously assesses relationships, structure, authority, behaviour, and meaning across the web. What appears on the search results page is not a permanent truth but the most likely ordering produced by an evolving model.

This distinction matters because it changes how websites should be understood, built, and optimised. Understanding how Google evaluates websites becomes essential because search systems increasingly construct internal models from structural relationships and repeated patterns rather than from isolated pages. Many websites fail to grow not because they lack content or technical optimisation, but because search systems have formed a stable probability model around them. Once that model stabilises, additional activity often reinforces the existing interpretation rather than changing it.

Rankings Are Outcomes, Not Assets

Most website owners think of rankings as possessions. A business might say: “We rank number three for this keyword” or “We lost our ranking.” But rankings are not owned. They are recalculated continuously. Every query triggers a fresh evaluation involving semantic interpretation, entity relationships, authority weighting, behavioural signals, internal structure, external references, freshness, contextual relevance, and probabilistic prediction. The ranking displayed is simply the most likely ordering the system currently believes will satisfy the search intent. Understanding how search systems decide ranking positions requires recognising that modern search visibility behaves more like a dynamic probability model than a fixed ladder of permanent positions. This means a page is never permanently ranked; it only has a certain probability of appearing prominently under particular conditions. That probability changes constantly, which is why rankings fluctuate even when no obvious website changes occur.
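To make the idea of a ranking as a probability concrete, the sketch below models it under deliberately simple assumptions: each page has a fixed, hypothetical base score, and every simulated query adds random Gaussian noise before the pages are compared. It is a toy Monte Carlo illustration, not a description of how Google scores pages; the function `top_position_probability` and the scores are invented for this example.

```python
import random


def top_position_probability(base_scores, noise=0.1, trials=10_000, seed=42):
    """Estimate how often each page ranks first when its score is perturbed.

    base_scores: hypothetical relevance scores, page -> float.
    noise: standard deviation of the Gaussian noise added per simulated query.
    """
    rng = random.Random(seed)
    wins = dict.fromkeys(base_scores, 0)
    for _ in range(trials):
        # Each simulated query re-scores every page with fresh noise.
        noisy = {page: score + rng.gauss(0, noise) for page, score in base_scores.items()}
        winner = max(noisy, key=noisy.get)
        wins[winner] += 1
    return {page: count / trials for page, count in wins.items()}


scores = {"page-a": 0.82, "page-b": 0.80, "page-c": 0.60}
for page, prob in top_position_probability(scores).items():
    print(f"{page}: ranks first in {prob:.1%} of simulated queries")
```

Two pages with nearly identical scores trade first place across simulated queries, while a clearly weaker page rarely wins. Nothing about the pages changes between queries; only the evaluation does.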

The Website as a Probability Graph

A website can be understood as a graph. Pages are nodes, internal links are edges, and every link creates a pathway along which crawlers and authority can travel. Understanding how search systems see your organisation begins here: the system does not evaluate a page in isolation, but in the context of how often, and from where, that page is reached within this graph.

Visibility is therefore influenced not only by content quality, but by the probability that the system repeatedly encounters, reinforces, and revisits the page as part of the website’s conceptual structure. This is why internal linking matters far more than most SEO discussions acknowledge. Internal links are not merely navigation tools; they shape ranking probability itself. For a deeper explanation of this structural process, see Structural Authority Flow in Search Systems.
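One simple, observable proxy for how readily a crawler encounters a page is its click depth: the minimum number of internal links needed to reach it from the homepage. The sketch below computes click depth with a breadth-first search over a hypothetical site graph. Treating shallow pages as more frequently encountered is an assumption made for illustration, not a documented Google rule, and `click_depth` is a hypothetical helper.

```python
from collections import deque


def click_depth(links, start):
    """Breadth-first search over internal links; returns clicks needed to reach each page.

    links: hypothetical site graph, page -> list of pages it links to.
    Pages absent from the result cannot be reached from `start` at all.
    """
    depth = {start: 0}
    queue = deque([start])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depth:
                depth[target] = depth[page] + 1
                queue.append(target)
    return depth


site = {
    "/": ["/services", "/blog"],
    "/services": ["/services/seo-audit"],
    "/blog": ["/blog/post-1", "/blog/post-2"],
    "/blog/post-1": ["/services/seo-audit"],
    "/blog/post-2": [],
    "/old-landing-page": ["/"],  # nothing links here, so it is never reached
}
print(click_depth(site, "/"))
```

The legacy landing page links out but receives no links, so it never appears in the result: from the homepage, it is never encountered.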

Probability Concentration and Structural Dominance

Strong websites tend to develop what can be described as probability concentration. Authority pathways repeatedly reinforce key pages, supporting content routes attention toward central nodes, and internal links converge around primary concepts. Understanding how authority flows through website structures becomes important because search systems increasingly rely on repeated structural reinforcement to determine which pages carry the greatest conceptual weight. Over time, the search system becomes more confident about what the website represents, which pages matter most, and where authority should accumulate. This creates dominant ranking pathways: the probability of key pages appearing prominently increases because the structure consistently reinforces the same interpretation.

Weak websites often exhibit the opposite behaviour. Authority disperses without a clear destination, important pages compete against each other, legacy pages retain structural prominence, and supporting content loops internally without reinforcing the conceptual centre. The result is a fragmented probability distribution and reduced conceptual clarity.
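The difference between concentrated and fragmented structures can be illustrated with the classic PageRank formula, used here only as a stand-in for whatever signals modern systems actually use. The sketch computes PageRank by power iteration for two hypothetical five-page sites that contain the same pages but different internal links, and reports the share of probability that lands on the intended pillar page. The damping factor of 0.85 is the value suggested in the original PageRank paper; the URLs and site layouts are invented.

```python
def pagerank(links, damping=0.85, iterations=100):
    """Power-iteration PageRank over a hypothetical internal-link graph (page -> targets)."""
    pages = sorted(set(links) | {t for targets in links.values() for t in targets})
    n = len(pages)
    rank = dict.fromkeys(pages, 1.0 / n)
    for _ in range(iterations):
        # Every page first receives the random-jump share.
        new = dict.fromkeys(pages, (1.0 - damping) / n)
        for page in pages:
            targets = links.get(page, [])
            if targets:
                # Split this page's rank evenly across its outgoing links.
                for t in targets:
                    new[t] += damping * rank[page] / len(targets)
            else:
                # Dangling page: spread its rank evenly over every page.
                for t in pages:
                    new[t] += damping * rank[page] / n
        rank = new
    return rank


supports = ["/support-1", "/support-2", "/support-3", "/support-4"]

# Concentrated: every supporting page links back to the pillar.
concentrated = {"/pillar": supports, **{s: ["/pillar"] for s in supports}}

# Fragmented: supporting pages only link to each other in a loop.
fragmented = {
    "/pillar": supports,
    **{s: [supports[(i + 1) % len(supports)]] for i, s in enumerate(supports)},
}

for name, site in [("concentrated", concentrated), ("fragmented", fragmented)]:
    print(f"{name}: pillar holds {pagerank(site)['/pillar']:.1%} of the probability")
```

In the concentrated site the pillar holds roughly 48% of the probability. In the fragmented site, where supporting pages loop among themselves, it keeps only the 3% that arrives through random jumps.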

Why Rankings Plateau

Ranking probability also explains why websites plateau. Many websites initially grow quickly because search systems are still forming an interpretation. During this stage, new links strongly influence understanding, content rapidly shifts topical associations, and structural adjustments significantly alter ranking probability. Eventually, however, the system reaches interpretive stability. The website’s structural patterns become familiar, conceptual relationships stabilise, authority pathways become predictable, and the system becomes increasingly confident in its model. This closely relates to the hidden structural model search systems form around websites, where repeated signals gradually reinforce a stable interpretation over time. Once this happens, new activity often reinforces the existing interpretation instead of changing it. This is where many businesses become trapped. They continue publishing more content, building more backlinks, updating pages, and increasing SEO activity, yet rankings barely move. The issue is not effort; the issue is that the ranking probability distribution has stabilised.

To change rankings meaningfully, the underlying interpretive structure often has to change first. This is explored further in How Google Evaluates Websites.
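The plateau described above has a familiar statistical analogue: as evidence accumulates, each new observation moves an estimate less. The sketch below uses Beta-Bernoulli updating, a standard Bayesian model, purely as an analogy for interpretive stability. It is not a claim about how any search engine updates its model; the uniform prior, the signals, and the helper `posterior_mean_shifts` are hypothetical.

```python
def posterior_mean_shifts(observations, alpha=1.0, beta=1.0):
    """Track how far each new observation moves a Beta-Bernoulli posterior mean.

    observations: sequence of 1 (signal supports the interpretation) or 0 (contradicts it).
    alpha, beta: hypothetical Beta(1, 1) prior, i.e. no initial preference.
    Returns a list of (observation_index, posterior_mean, absolute_shift).
    """
    history = []
    mean = alpha / (alpha + beta)
    for i, obs in enumerate(observations, start=1):
        alpha += obs
        beta += 1 - obs
        new_mean = alpha / (alpha + beta)
        history.append((i, new_mean, abs(new_mean - mean)))
        mean = new_mean
    return history


# 200 consistent signals, then one contradicting signal at the end.
history = posterior_mean_shifts([1] * 200 + [0])
print("shift from signal 1:  ", round(history[0][2], 4))
print("shift from signal 201:", round(history[-1][2], 4))
```

The first signal shifts the estimate by about 0.17; after 200 consistent signals, a contradicting one shifts it by less than 0.005. The same amount of new evidence matters far less once the model has stabilised.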

Internal Linking as a Probability System

Internal linking is fundamentally a probability mechanism. Every internal link influences crawl pathways, authority movement, reinforcement frequency, and interpretive emphasis. When search systems crawl a website repeatedly, they observe which pages receive the most references, which pages redistribute authority, which pages appear central, and which pages repeatedly emerge across pathways. This recurrence strengthens ranking probability. The process resembles a feedback loop in which repeated pathways reinforce interpretive stability, stability increases confidence, confidence increases visibility, and visibility attracts further reinforcement. This is why a page can sometimes dominate rankings despite having less content than competitors: its structural probability may simply be stronger because the system repeatedly encounters it as the conceptual centre of the website.
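Two of the observations in this section, which pages receive the most references and which pages redistribute authority, correspond to basic graph measures: inbound and outbound link counts. The sketch computes both for a hypothetical site. Counting each source-to-target pair once and ignoring self-links are choices made for this example, and `link_profile` is a hypothetical helper.

```python
def link_profile(links):
    """Count inbound references and outbound links per page.

    links: hypothetical site graph, page -> list of pages it links to.
    Each source -> target pair is counted once and self-links are ignored.
    """
    pages = set(links) | {t for targets in links.values() for t in targets}
    inbound = dict.fromkeys(pages, 0)
    outbound = dict.fromkeys(pages, 0)
    for source, targets in links.items():
        for target in set(targets) - {source}:
            inbound[target] += 1
            outbound[source] += 1
    return inbound, outbound


site = {
    "/": ["/services", "/blog", "/about"],
    "/services": ["/services/seo-audit", "/"],
    "/blog": ["/blog/post-1", "/blog/post-2", "/"],
    "/blog/post-1": ["/services/seo-audit", "/blog"],
    "/blog/post-2": ["/services/seo-audit", "/blog"],
    "/about": ["/", "/services/seo-audit"],
}
inbound, outbound = link_profile(site)
for page in sorted(inbound, key=lambda p: (-inbound[p], p)):
    print(f"{page}: {inbound[page]} inbound, {outbound[page]} outbound")
```

Here `/services/seo-audit` receives the most internal references even though it links to nothing itself, which makes it a structural endpoint that the rest of the site keeps pointing toward.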

The Random Surfer Model

The original PageRank system introduced the concept of the “Random Surfer.” Imagine a user who moves from page to page by following links at random, occasionally jumping to an entirely random page instead. Over time, some pages become highly probable destinations while others are rarely encountered. The long-run share of time the surfer spends on each page is what mathematicians call a stationary distribution. In practical SEO terms, some pages naturally accumulate visibility probability while others leak authority away. Modern search systems are vastly more sophisticated than the original PageRank model, but the core probabilistic principles remain deeply embedded within search architecture. Search systems still evaluate transition likelihoods, pathway recurrence, structural centrality, and authority concentration.
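The random surfer can be simulated directly. The sketch below follows a random outgoing link with probability `damping` (0.85, the value suggested in the original PageRank paper) and otherwise jumps to a random page, which it also does whenever it reaches a page with no outgoing links. Counting where it spends its time approximates the stationary distribution. The site and the function `random_surfer` are hypothetical, and this is the textbook model rather than anything modern search systems are known to run.

```python
import random
from collections import Counter


def random_surfer(links, damping=0.85, steps=200_000, seed=7):
    """Simulate the random surfer and return the fraction of steps spent on each page.

    With probability `damping` the surfer follows a random outgoing link;
    otherwise, or on a page with no outgoing links, it jumps to a random page.
    """
    rng = random.Random(seed)
    pages = sorted(set(links) | {t for targets in links.values() for t in targets})
    visits = Counter()
    current = rng.choice(pages)
    for _ in range(steps):
        visits[current] += 1
        targets = links.get(current, [])
        if targets and rng.random() < damping:
            current = rng.choice(targets)
        else:
            current = rng.choice(pages)
    return {page: visits[page] / steps for page in pages}


supports = ["/support-1", "/support-2", "/support-3", "/support-4"]
# Hypothetical hub site: the pillar links to every supporting page and each links back.
hub = {"/pillar": supports, **{s: ["/pillar"] for s in supports}}

freq = random_surfer(hub)
for page, share in sorted(freq.items(), key=lambda kv: -kv[1]):
    print(f"{page}: {share:.3f}")
```

Over enough steps, the pillar page's share settles near 0.48, which is the exact stationary value for this graph under the same damping factor.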

Semantic Probability

Modern search systems do not evaluate structure alone. They also evaluate semantic relationships probabilistically, estimating how likely concepts are to be related, how strongly entities connect, how consistently topics reinforce one another, and whether meaning across the site appears coherent. This is one reason keyword-based SEO became insufficient. Search systems no longer evaluate isolated words; they evaluate the probabilistic relationships between concepts. Understanding how search systems build semantic interpretations of organisations helps explain why conceptual consistency increasingly influences modern search visibility. A website discussing graph theory, structural authority, ranking systems, interpretation, probability modelling, and internal linking creates a semantic cluster. Repeated reinforcement of those related concepts increases the probability that the website becomes associated with that conceptual space. This is how topical authority emerges probabilistically rather than mechanically.
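A crude way to estimate how likely two concepts are to be related is to count how often they appear together. The sketch below computes the conditional probability that a page covering concept A also covers concept B, over a hypothetical set of pages with hand-assigned concepts. Production systems rely on far richer language models that are not described here; this only illustrates deriving relatedness from repeated co-occurrence, and `concept_cooccurrence` is a hypothetical helper.

```python
from collections import Counter
from itertools import combinations


def concept_cooccurrence(pages):
    """Return a function estimating P(B | A): the share of pages with concept A that also cover B.

    pages: hypothetical mapping of URL -> set of concepts the page covers.
    """
    single = Counter()
    pair = Counter()
    for concepts in pages.values():
        single.update(concepts)
        # Store each unordered pair under a sorted key so (A, B) and (B, A) match.
        for a, b in combinations(sorted(concepts), 2):
            pair[(a, b)] += 1

    def conditional(a, b):
        key = tuple(sorted((a, b)))
        return pair[key] / single[a] if single[a] else 0.0

    return conditional


pages = {
    "/ranking-probability": {"probability", "ranking", "internal linking"},
    "/authority-flow": {"authority", "internal linking", "graph theory"},
    "/pagerank-explained": {"graph theory", "probability", "ranking"},
    "/office-party-photos": {"events"},
}
related = concept_cooccurrence(pages)
print(related("ranking", "probability"))  # 1.0
print(related("graph theory", "events"))  # 0.0
```

Every page about ranking also covers probability, so the pair reinforces itself; the off-topic page shares nothing with the cluster and contributes no reinforcement.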

Why More Content Can Reduce Visibility

One of the most misunderstood aspects of SEO is that additional content can weaken rankings. Every new page introduces new pathways, new transitions, new authority distributions, and new interpretive possibilities. If new content does not reinforce the conceptual centre of the website, probability disperses. The website begins to appear broader but less focused, and search systems struggle to identify the dominant intent and primary authority nodes. The result is dilution. This is why some websites grow larger while simultaneously becoming weaker: they increase content volume but reduce structural probability concentration.
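Dilution can be shown with the textbook PageRank model, again used only as a stand-in for real ranking signals. The sketch below starts from a hypothetical site whose supporting pages all reinforce a pillar page, then adds six new posts that are linked from the homepage but only link to each other. The page names, link patterns, and choice of classic PageRank are assumptions for illustration.

```python
def pagerank(links, damping=0.85, iterations=100):
    """Power-iteration PageRank over a hypothetical internal-link graph (page -> targets)."""
    pages = sorted(set(links) | {t for targets in links.values() for t in targets})
    n = len(pages)
    rank = dict.fromkeys(pages, 1.0 / n)
    for _ in range(iterations):
        new = dict.fromkeys(pages, (1.0 - damping) / n)
        for page in pages:
            targets = links.get(page, []) or pages  # dangling pages spread rank evenly
            for t in targets:
                new[t] += damping * rank[page] / len(targets)
        rank = new
    return rank


supports = ["/support-1", "/support-2", "/support-3", "/support-4"]
before = {"/": ["/pillar"], "/pillar": supports, **{s: ["/pillar"] for s in supports}}

new_posts = [f"/blog/new-post-{i}" for i in range(1, 7)]
after = dict(before)
after["/"] = ["/pillar"] + new_posts
for i, post in enumerate(new_posts):
    # New posts only link to the next new post, never back to the pillar.
    after[post] = [new_posts[(i + 1) % len(new_posts)]]

for label, site in [("before", before), ("after", after)]:
    share = pagerank(site)["/pillar"]
    print(f"{label}: {len(site)} pages, pillar share {share:.1%}")
```

The site grows from 6 to 12 pages, while the pillar's share of the probability falls from about 47% to about 20%, because the new cluster absorbs probability without passing it back to the centre.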

AI Search and Probabilistic Interpretation

AI-driven search systems amplify the importance of ranking probability. Large language models and AI summarisation systems do not simply retrieve pages; they construct probabilistic representations of information. Websites increasingly compete not only for rankings, but for inclusion within AI interpretation systems. These systems evaluate conceptual consistency, entity clarity, structural coherence, and semantic reinforcement. Websites with fragmented structures are difficult for AI systems to interpret confidently, while websites with clear probability concentration are easier to model. As the volume of content on the web continues to grow, systems require stronger probabilistic confidence to determine which sources matter, which entities dominate, and which pages deserve interpretive priority.

The Strategic Implication

This changes how SEO should be approached. Traditional SEO often focuses on isolated tasks such as more content, more links, more optimisation, and more keywords. A probabilistic perspective asks different questions: where does authority accumulate, which pages dominate structural pathways, which concepts reinforce one another, where does probability leak away, and what interpretation is the system stabilising around? These are architectural questions rather than tactical ones. This is why many established websites require structural evaluation before additional optimisation. The Strategic Search Authority Review process examines how ranking probability operates across an entire website, revealing how search systems currently interpret structure, authority distribution, and conceptual focus before further optimisation reinforces the existing model.
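Two of the questions above, where probability leaks away and which pages sit outside the main pathways, can be partly approached with simple structural checks. The sketch below flags orphan pages (no internal links point to them) and dead ends (no outgoing internal links, where a crawl path stops). It is a hypothetical illustration of the kind of check an audit might include, not a description of the Strategic Search Authority Review process; `structural_leaks` and the site are invented.

```python
def structural_leaks(links, entry="/"):
    """Flag orphan pages and dead ends in a hypothetical site graph (page -> targets).

    orphans:   pages no other page links to, excluding the entry page.
    dead_ends: pages with no outgoing internal links.
    """
    pages = set(links) | {t for targets in links.values() for t in targets}
    linked_to = {t for source, targets in links.items() for t in targets if t != source}
    orphans = sorted(p for p in pages if p not in linked_to and p != entry)
    dead_ends = sorted(p for p in pages if not links.get(p))
    return orphans, dead_ends


site = {
    "/": ["/services", "/blog"],
    "/services": ["/"],
    "/blog": ["/blog/post-1"],
    "/blog/post-1": [],
    "/legacy-offer": ["/services"],
}
orphans, dead_ends = structural_leaks(site)
print("orphans:", orphans)      # ['/legacy-offer']
print("dead ends:", dead_ends)  # ['/blog/post-1']
```

Neither check says anything about content quality; both only describe where the structure fails to route attention, which is the architectural focus the probabilistic perspective calls for.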

Conclusion

Search rankings are not fixed positions waiting to be claimed. They are probabilistic outcomes generated from evolving structural, semantic, and behavioural models. Modern search systems evaluate websites as connected systems rather than isolated pages, continuously estimating probabilities of relevance, authority, intent satisfaction, conceptual centrality, and trust. The websites that succeed long term are rarely those producing the highest volume of activity. They are the websites that create the clearest probability structures. They establish conceptual centres, coherent authority pathways, strong semantic reinforcement, and stable interpretive signals. In modern search, rankings are not merely earned through optimisation. They emerge from probability.